Overview

This comprehensive course offers an in-depth exploration of Microsoft Fabric, a unified analytics platform. Participants will learn the essentials of end-to-end analytics, starting with foundational concepts and progressing to advanced functionalities. The course covers the design and use of lakehouses, data pipelines, Apache Spark integration, data warehousing, and Power BI semantic modeling. Additionally, it emphasizes real-time intelligence, advanced query techniques, and robust security practices. With a focus on scalability, performance optimization, and administrative management, this course equips data professionals with the skills necessary to implement and manage analytics solutions effectively using Microsoft Fabric.

Audience Profile

The primary audience for this course is data professionals with experience in data modeling and analytics. DP-600 is designed for professionals who want to use Microsoft Fabric to create and deploy enterprise-scale data analytics solutions.

This course is designed for experienced data professionals skilled at data preparation, modeling, analysis, and visualization, for example those who hold the PL-300: Power BI Data Analyst certification. Learners should have prior experience with one of the following programming languages: Structured Query Language (SQL), Kusto Query Language (KQL), or Data Analysis Expressions (DAX).

Prerequisites

To benefit fully from the course, delegates should possess the following foundational knowledge and skills:

Data Analytics Fundamentals:

- Basic understanding of data analysis concepts and methodologies.

- Familiarity with data lifecycle processes (ingestion, transformation, storage, and visualization).

Database Knowledge:

- Experience working with relational databases and querying using SQL.

- Awareness of database schema concepts (e.g., tables, joins, and relationships).

ETL Processes:

- Familiarity with Extract, Transform, Load (ETL) workflows.

- Understanding the role of ETL tools in data integration.

Cloud Data Platforms:

- Awareness of cloud-based data solutions, preferably Microsoft Azure or equivalent.

- Basic knowledge of data lake and data warehouse concepts.

Power BI Basics:

- Exposure to Power BI for creating dashboards and reports.

- Understanding measures, calculated columns, and relationships in Power BI.

Programming Concepts:

- Basic experience with a programming language such as Python or R (helpful for data transformation and Apache Spark modules).

- Understanding of DAX (Data Analysis Expressions) and its application in Power BI.

Security and Permissions:

- Familiarity with data security principles such as row-level and column-level security.

- Awareness of access control mechanisms in data platforms.

Business Context:

- Ability to interpret business requirements and translate them into analytics use cases.

These prerequisites will help delegates engage effectively with the course material, navigate the tools and technologies in Microsoft Fabric, and apply the learnings to real-world scenarios.

Outline

Module 1: Explore end-to-end analytics with Microsoft Fabric

- Describe end-to-end analytics in Microsoft Fabric.

- Understand data teams and roles that use Fabric.

- Describe how to enable and use Fabric.

Module 2: Get started with lakehouses in Microsoft Fabric

- What is a lakehouse?

- Load data into a lakehouse

- Explore, transform, and visualize data in the lakehouse

Module 3: Ingest data with Dataflow Gen2 in Microsoft Fabric

- Understand Dataflow Gen2

- Dataflow Gen2 benefits and limitations

- Explore Dataflow Gen2

- Integrate Dataflow Gen2 and pipelines

Module 4: Orchestrate processes and data movement with Microsoft Fabric

- Pipelines in Microsoft Fabric

- Common Activities – copy data

- Use templates for common activities

- Run and monitor pipelines

Module 5: Use Apache Spark in Microsoft Fabric

- What is Apache Spark?

- How to use Apache Spark

- Prepare to use Apache Spark

- Run Spark in Fabric

- Load data in a Spark DataFrame

- Transform data in a Spark DataFrame

- Partition the output file

- Visualize data

Module 6: Get started with data warehouses in Microsoft Fabric

- Data warehouse fundamentals

- Understand Fabric warehouses

- Create a data warehouse in Fabric

- Design a data warehouse

- Data warehouse schema design

- Query data

- Visualize queries

- Build relationships

- Security overview, workspace and item permissions

Module 7: Load data into a Microsoft Fabric data warehouse

- Understand ETL (Extract, Transform and Load)

- Load dimension tables

- Load data with Fabric pipelines

- Configure the copy data assistant

- Load data with Dataflow Gen2

- Transform data with Copilot

Module 8: Query a data warehouse in Microsoft Fabric

- Aggregate measures by dimension attributes

- Joins in a snowflake schema

- Run queries and export results

- Explore visual query editor

- Connect with SQL clients

- DAX calculations

Module 9: Monitor a Microsoft Fabric data warehouse

- Monitor capacity metrics

- Warehouse operation categories

- Timepoint explore graph

- Usage considerations

Module 10: Secure a Microsoft Fabric data warehouse

- Dynamic Data Masking

- Row-level security

- Column-level security

- Granular permissions using T-SQL

Module 11: Add measures to Power BI semantic models

- Understand implicit and explicit measures.

- Create measures, calculated columns, and calculated tables.

- Identify when to use a measure or calculated column.

Module 12: Design scalable semantic models

- Choose appropriate storage modes for your semantic model.

- Enable large semantic model storage format and incremental refresh.

- Create relationships between tables in a semantic model.

- Design dynamic elements to extend calculations in a semantic model.

Module 13: Optimize a model for performance in Power BI

- Review the performance of measures, relationships, and visuals.

- Improve performance by reducing cardinality levels.

- Optimize DirectQuery models with table-level storage.

- Create and manage aggregations.

Module 14: Create and manage Power BI assets

- Create core and specialized semantic models.

- Create Power BI Template and Power BI Project files.

- Use lineage view and endorse data assets in Power BI service.

- Use XMLA endpoint to connect semantic models.

Module 15: Enforce semantic model security

- Restrict access to Power BI model data with RLS.

- Restrict access to Power BI model objects with OLS.

- Apply good development practices to enforce Power BI model security.

Module 16: Get started with Real-Time Intelligence with Microsoft Fabric

- Use the basic syntax of Kusto Query Language (KQL) and querysets.

- Describe how to execute T-SQL queries in the Queryset canvas.

- Copy, export, and share data results.

Module 17: Secure data access in Microsoft Fabric

- Understand Fabric security model

- Configure workspace and item permissions

- Apply granular permissions

Module 18: Administer Microsoft Fabric

- Describe Fabric admin tasks

- Navigate the admin center

- Manage user access

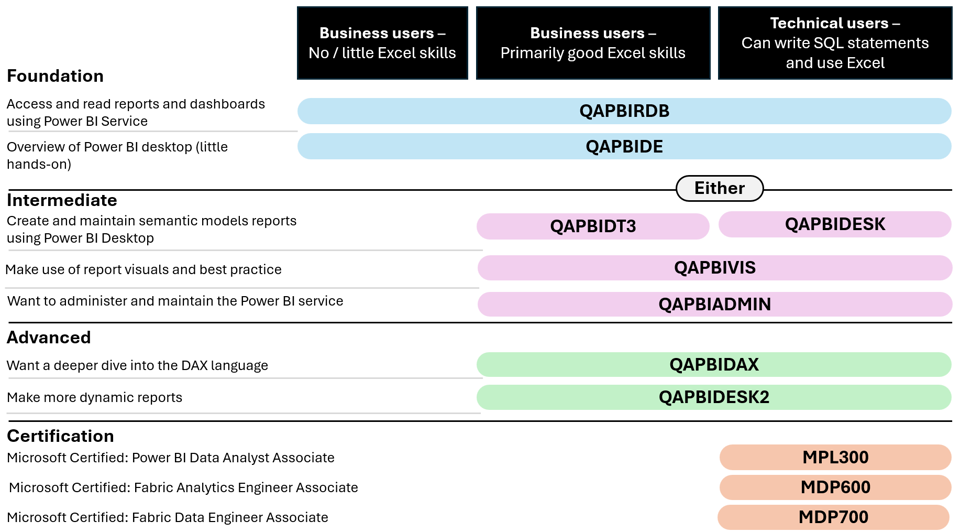

Power BI course selector infographic

| Course code | Course title |
| --- | --- |
| QAPBIRDB | Microsoft Power BI Reports and Dashboards for Business Users |
| QAPBIDE | Microsoft Power BI Desktop Essentials |
| QAPBIDT3 | Power BI Desktop for Business Users |
| QAPBIDESK | Power BI Desktop for Technical Users |
| QAPBIDESK2 | Power BI Desktop Intermediate |
| QAPBIVIS | Power BI Data Visualisation |
| QAPBIDAX | Using DAX in Power BI |
| MPL300 | Microsoft Power BI Data Analyst |
| MDP600 | Microsoft Fabric Analytics Engineer |

Frequently asked questions

How can I create an account on myQA.com?

There are a number of ways to create an account. If you are a self-funder, simply select the "Create account" option on the login page.

If you have been booked onto a course by your company, you will receive a confirmation email. From this email, select "Sign into myQA" and you will be taken to the "Create account" page. Complete all of the details and select "Create account".

If you have the booking number, you can also go here and select the "I have a booking number" option. Enter the booking reference and your surname. If the details match, you will be taken to the "Create account" page, where you can enter your details and confirm your account.

Find more answers to frequently asked questions in our FAQs: Bookings & Cancellations page.

How do QA’s virtual classroom courses work?

Our virtual classroom courses allow you to access award-winning classroom training, without leaving your home or office. Our learning professionals are specially trained on how to interact with remote attendees and our remote labs ensure all participants can take part in hands-on exercises wherever they are.

We use the WebEx video conferencing platform by Cisco. Before you book, check that you meet the WebEx system requirements and run a test meeting to ensure the software is compatible with your firewall settings. If it doesn’t work, try adjusting your settings or contact your IT department about permitting the website.

How do QA’s online courses work?

QA online courses, also commonly known as distance learning courses or e-learning courses, take the form of interactive software designed for individual learning, but you will also have access to full support from our subject-matter experts for the duration of your course.

Once you have purchased the online course and completed your registration, you will receive the details you need to access it immediately through our e-learning platform, and you can start learning straight away from any compatible device. Access to the online learning platform is valid for one year from the booking date.

All courses are built around case studies and presented in an engaging format, which includes storytelling elements, video, audio and humour. Every case study is supported by sample documents and a collection of Knowledge Nuggets that provide more in-depth detail on the wider processes.

When will I receive my joining instructions?

Joining instructions for QA courses are sent two weeks prior to the course start date, or immediately if the booking is confirmed within this timeframe. For course bookings made via QA but delivered by a third-party supplier, joining instructions are sent to attendees prior to the training course, but timescales vary depending on each supplier’s terms. Read more FAQs.

When will I receive my certificate?

Certificates of Achievement are issued at the end of the course, either as a hard copy or via email.